The decision by xAI to implement restrictive filters on Grok’s image generation capabilities represents a fundamental shift from "maximalist free speech" to "operational risk mitigation." This transition is not merely a response to public outcry; it is a calculated adjustment to the cost-benefit ratio of generative AI deployment. When an AI model produces non-consensual sexual imagery (NCII) or brand-damaging deepfakes, the platform incurs three distinct forms of debt: regulatory friction, compute inefficiency, and advertiser flight.

For Grok, the breaking point came when the tension between the model’s "unfiltered" USP (Unique Selling Proposition) and the reality of platform liability became unsustainable. The following analysis deconstructs the mechanisms of this restriction and the strategic implications for the xAI roadmap.

The Architecture of Generative Friction

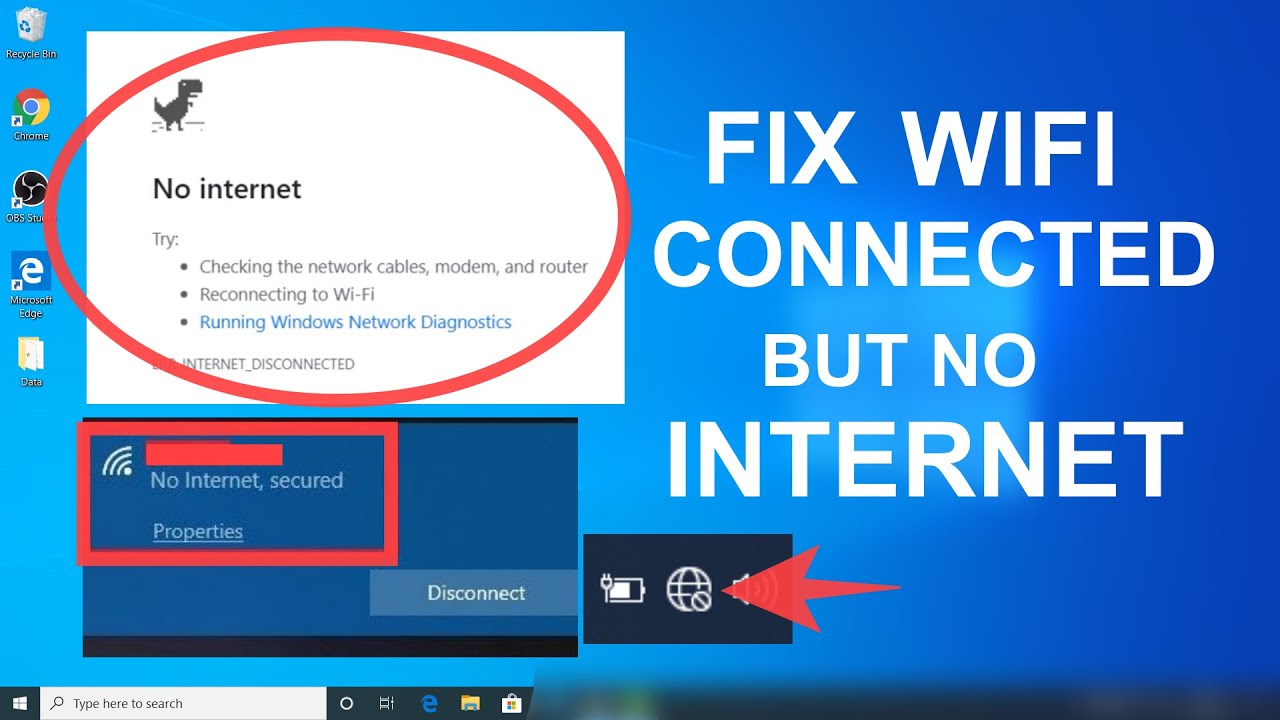

Content moderation in large-scale diffusion models like Flux—the underlying engine for Grok’s image generation—is not a binary switch. It functions as a multi-layered filtration stack. The initial "unfiltered" release of Grok-2 relied heavily on prompt-level blocking, a superficial layer that is easily bypassed through "jailbreaking" or linguistic obfuscation.

The move toward stricter restrictions indicates an evolution into three specific technical layers:

- Input Vector Sanitization: The system analyzes the latent intent of a prompt before it reaches the diffusion engine. By mapping keywords and semantic clusters to a "restricted" coordinate space, the model can preemptively refuse generation.

- Safety Guidance During Inference: This involves steering the denoising process. If the model begins to converge on a representation that matches a "human-likeness" or "explicit" heuristic, the guidance scale is adjusted to introduce noise or trigger a failure state.

- Output Classification Pipelines: A secondary vision model scans the generated pixels. If the probability of prohibited content exceeds a specific threshold (e.g., $P > 0.85$), the image is suppressed before the user ever sees it.
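
The three layers above can be sketched as a single gate around the generator. This is a minimal illustration, not xAI's implementation: `BLOCKED_TERMS`, `prompt_is_restricted`, `moderated_generate`, and the stub `generate`/`classify` callables are hypothetical names, and a keyword set stands in for real semantic screening. Only the 0.85 threshold comes from the text.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical values for illustration only; not xAI's actual configuration.
BLOCKED_TERMS = {"nude", "explicit"}
OUTPUT_THRESHOLD = 0.85  # the example threshold quoted in the text


@dataclass
class Result:
    image: Optional[bytes]
    blocked_by: Optional[str]  # name of the layer that refused, if any


def prompt_is_restricted(prompt: str) -> bool:
    """Layer 1: crude keyword stand-in for semantic input screening."""
    words = set(prompt.lower().split())
    return bool(words & BLOCKED_TERMS)


def moderated_generate(
    prompt: str,
    generate: Callable[[str], bytes],
    classify: Callable[[bytes], float],
) -> Result:
    """Run a prompt through the input check, the generator, and the output check."""
    if prompt_is_restricted(prompt):
        return Result(None, "input")
    # Layer 2 (guided denoising) would live inside `generate`; it is not modeled here.
    image = generate(prompt)
    # Layer 3: suppress the image if the classifier score exceeds the threshold.
    if classify(image) > OUTPUT_THRESHOLD:
        return Result(None, "output")
    return Result(image, None)


# Stub generator and classifier so the sketch runs without any model.
def fake_generate(prompt: str) -> bytes:
    return prompt.encode()


def fake_classify(image: bytes) -> float:
    return 0.9 if b"beach" in image else 0.1


for p in ("an explicit scene", "a beach photo", "a red bicycle"):
    print(p, "->", moderated_generate(p, fake_generate, fake_classify).blocked_by)
```

The keyword check is deliberately naive: it shows why prompt-level blocking alone is easy to bypass, and why the output classifier is needed as a backstop.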

This stack creates a trade-off between Model Utility and Safety Overhead. Every additional filter increases latency and requires more GPU cycles, effectively raising the "Marginal Cost per Safe Image."
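
One hedged way to put a number on the "Marginal Cost per Safe Image" is to divide the GPU time spent per attempt by the fraction of attempts that are actually delivered. The function name, the GPU-second figures, and the pass rate below are all hypothetical, and the sketch assumes every attempt pays the full generation and filter cost (in practice an input-stage refusal would skip generation).

```python
from typing import Sequence


def cost_per_safe_image(
    base_gpu_s: float, filter_gpu_s: Sequence[float], pass_rate: float
) -> float:
    """GPU-seconds spent per delivered image.

    base_gpu_s   -- generation cost per attempt (hypothetical figure)
    filter_gpu_s -- extra cost added by each filter layer
    pass_rate    -- fraction of attempts that survive all filters
    """
    if not 0 < pass_rate <= 1:
        raise ValueError("pass_rate must be in (0, 1]")
    return (base_gpu_s + sum(filter_gpu_s)) / pass_rate


unfiltered = cost_per_safe_image(4.0, [], 1.0)
filtered = cost_per_safe_image(4.0, [0.5, 0.5], 0.8)
print(f"unfiltered: {unfiltered:.2f} GPU-s, filtered: {filtered:.2f} GPU-s")
```

Under these made-up inputs the cost rises for two reasons at once: the filters add work to every attempt, and suppressed outputs are paid for but never delivered.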

The Economic Reality of Brand Safety

The "anti-woke" positioning of xAI was designed to capture a specific market segment: power users and developers frustrated by the over-indexing of safety in models like Google’s Gemini or OpenAI’s DALL-E. However, the business model of X (formerly Twitter) remains tethered to advertising revenue, even as it pivots toward subscriptions.

Advertisers do not view "free speech" as a feature; they view it as an environmental risk. When Grok-generated imagery of public figures in compromising or explicit contexts circulates on the same timeline as a corporate ad, the "Adjacency Risk" triggers a withdrawal of capital.

xAI’s restriction pivot reveals a core tension:

- The Libertarian Model: Low censorship, high user engagement, high legal and PR volatility.

- The Enterprise Model: High censorship, predictable output, lower legal liability, attractive to blue-chip advertisers.

By restricting sexual image generation, xAI is attempting to bridge these models—maintaining the "edgy" brand persona while installing the "guardrails" necessary to prevent existential legal threats from the EU’s Digital Services Act (DSA) or potential US state-level legislation regarding deepfakes.

Quantifying the Risk of Unconstrained Diffusion

The primary risk for xAI was not the existence of the content itself, but the Velocity of Propagation. On X, an AI-generated image can reach millions of impressions within minutes. This creates a feedback loop that traditional human-in-the-loop moderation cannot contain.

We can define the risk function as:

$$R = V \times A \times T_m$$

Where:

- $V$ is the Velocity of content spread.

- $A$ is the Accuracy (the realism of the deepfake).

- $T_m$ is the Time to Moderation.

When $T_m$ is long and $V$ is high, the reputational damage is irreversible. The global outcry reported in the news served as the "canary in the coal mine," signaling that the $T_m$ of Grok’s automated systems was too long to contain the $V$ of its user base.
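
To see why moderation delay dominates, consider a toy estimate of exposure: impressions accrued before takedown are roughly velocity times delay, weighted by realism. The function name, the units (impressions per minute, minutes), and every number below are assumptions chosen for illustration, and the sketch assumes a constant spread rate, which real virality does not follow.

```python
def exposure_before_takedown(
    velocity: float, realism: float, minutes_to_moderation: float
) -> float:
    """Realism-weighted impressions accrued before the image is removed.

    velocity              -- impressions per minute (assumed constant)
    realism               -- 0..1 score for how convincing the image is
    minutes_to_moderation -- delay between posting and takedown
    """
    return velocity * realism * minutes_to_moderation


slow = exposure_before_takedown(velocity=10_000, realism=0.9, minutes_to_moderation=120)
fast = exposure_before_takedown(velocity=10_000, realism=0.9, minutes_to_moderation=5)
print(f"slow moderation: {slow:,.0f} weighted impressions")
print(f"fast moderation: {fast:,.0f} weighted impressions")
print(f"reduction factor: {slow / fast:.0f}x")
```

With velocity and realism held fixed, exposure scales linearly with the delay, so an automated filter that acts before posting is the only lever that drives it toward zero.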

The Technical Debt of Rapid Deployment

The "unfiltered" nature of early Grok iterations was likely a symptom of rapid development cycles rather than a permanent philosophical choice. Training a safety-aligned model takes significantly longer than training a raw one. The "Red Teaming" phase, in which researchers attempt to break the model to find vulnerabilities, was probably compressed to meet launch windows.

The subsequent restrictions are a form of "hotfixing" the model’s social alignment. This creates two technical challenges:

- False Positives: Over-aggressive filters may block benign artistic content (e.g., classical statues or medical illustrations), frustrating the core user base.

- Catastrophic Forgetting: If the model is fine-tuned too heavily to avoid certain topics, it may lose its ability to render complex, nuanced prompts in unrelated fields.
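
The false-positive problem can be illustrated with a classifier threshold. The scores and labels below are invented for the example (they are not measurements from any real classifier), and `false_positive_rate` is a hypothetical helper: it reports the share of benign images that a given threshold would block.

```python
from typing import List, Tuple

# (classifier score, is_actually_prohibited); made-up values for illustration.
SAMPLES: List[Tuple[float, bool]] = [
    (0.92, True),   # prohibited image, scored high
    (0.88, True),   # prohibited image, scored high
    (0.70, False),  # e.g. a classical statue
    (0.55, False),  # e.g. a medical illustration
    (0.10, False),  # e.g. a landscape
    (0.05, False),  # e.g. a product photo
]


def false_positive_rate(samples: List[Tuple[float, bool]], threshold: float) -> float:
    """Fraction of benign samples whose score exceeds the blocking threshold."""
    benign = [score for score, prohibited in samples if not prohibited]
    if not benign:
        return 0.0
    blocked = sum(1 for score in benign if score > threshold)
    return blocked / len(benign)


for t in (0.85, 0.50):
    print(f"threshold {t:.2f}: benign blocked = {false_positive_rate(SAMPLES, t):.0%}")
```

In this toy set, lowering the threshold to catch more borderline content starts blocking the statue and the medical illustration, which is exactly the frustration the first bullet describes.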

Structural Implications for the AI Market

The Grok pivot suggests that there is no "Third Way" in generative AI. Every major player, regardless of its stated mission, eventually converges on a similar set of restrictions. This convergence is driven by three external pressures:

- App Store Dominance: Distribution via iOS and Android requires adherence to strict "Not Safe For Work" (NSFW) policies. If Grok remained a tool for generating explicit content, the X app would face de-platforming.

- Compute Provider Policies: Most high-end H100 clusters are leased through providers (like Oracle or AWS) who have their own Acceptable Use Policies.

- Insurance and Liability: Insuring a tech company that knowingly facilitates the creation of NCII is prohibitively expensive or impossible.

The Strategic Path Forward

To maintain its competitive edge while adhering to these new constraints, xAI must shift its focus from "unfiltered" to "hyper-personal."

The next logical move is the implementation of Edge-Based Processing or User-Level Sovereignty. If the heavy lifting of image generation is moved to the user's local hardware (where possible) or gated behind strict identity verification, xAI can offload the liability of the content generated. However, as long as the generation happens on xAI's servers and is displayed on the X platform, the "Restrictive Filter" is a permanent requirement.

The era of the "Wild West" in generative AI is closing. The winners will not be those who allow everything, but those who can define the boundary between "creative freedom" and "platform liability" with the highest precision. For xAI, the "Masterclass" in this space is no longer about removing filters—it is about building the most sophisticated, invisible filters that protect the platform without alienating the power user.